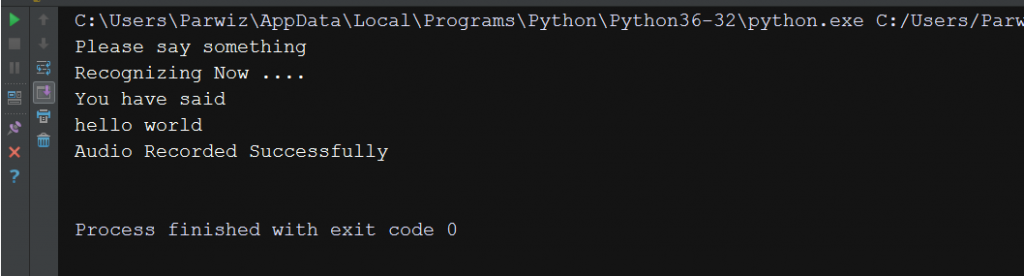

Google speech to text API Python

7/24/2023

Speech recognition in Python requires installing a speech recognition package. A variety of Python speech recognition packages are available online, for example apiai, SpeechRecognition, google-cloud-speech, pocketsphinx, and watson-developer-cloud. Features offered across these packages include:

- Support for several engines and APIs, online and offline.
- A simple speech-to-text API service.
- Easy integration into developer applications.
- Support for continuous speech recognition.
- Simple development, debugging, execution, and deployment of APIs.

Some of these tools offer integrated capabilities that go beyond simple voice recognition, such as natural language processing for determining a speaker's intent, while others focus only on the conversion of speech to text. SpeechRecognition stands out because it is easy for a beginner to use and is compatible with many of the available speech recognition APIs. It also makes it very simple to retrieve the audio input needed to recognize speech. For these reasons, and to keep this tutorial simple, we will be using SpeechRecognition.

Set up a Speech Recognition Project using Python SpeechRecognition Library

Prerequisites

- Python is required. Python versions 2 and 3 are both compatible with SpeechRecognition, although installation on Python 2 involves some extra steps. We will be using Python 3.3+ for this tutorial.
- PyAudio is required only if you want to use microphone input.
- PocketSphinx is required only if you need to use the Sphinx recognizer.
- Google API Client Library for Python is required only if you need to use the Google Cloud Speech API.
- A FLAC encoder is required only if the system is not x86-based Windows/Linux/OS X.

For this project, we will show how to set up a speech recognition project for two different environments, Windows and Linux. For Windows, we will use Visual Studio, or you can use any other Python IDE. For Linux (Ubuntu 20.04), we do not need any IDE.
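As a rough illustration of the prerequisites above, the sketch below reports which of the optional packages are importable and shows one way a file could be transcribed with SpeechRecognition. The helper names `check_prerequisites` and `transcribe_file` are hypothetical, and the module names in `PREREQUISITES` are assumed to be the usual import names of the corresponding packages. The transcription function uses the library's `Recognizer`, `AudioFile`, and `recognize_google` calls and needs both the package and network access, so treat it as a starting point rather than a complete setup.

```python
from importlib.util import find_spec

# Assumption: these are the usual import names for the packages
# named in the prerequisites list.
PREREQUISITES = {
    "SpeechRecognition": "speech_recognition",
    "PyAudio (microphone input)": "pyaudio",
    "PocketSphinx (Sphinx recognizer)": "pocketsphinx",
    "Google API Client Library (Google Cloud Speech API)": "googleapiclient",
}


def check_prerequisites(modules=PREREQUISITES):
    """Return {label: bool} telling whether each module can be imported."""
    return {label: find_spec(name) is not None for label, name in modules.items()}


def transcribe_file(path):
    """Transcribe a WAV, AIFF, or FLAC file; returns None if nothing was understood."""
    # Imported lazily so the prerequisite check works without the package.
    import speech_recognition as sr

    recognizer = sr.Recognizer()
    with sr.AudioFile(path) as source:
        audio = recognizer.record(source)  # read the entire file into memory
    try:
        return recognizer.recognize_google(audio)
    except sr.UnknownValueError:
        return None  # the speech was unintelligible
    except sr.RequestError as err:
        raise RuntimeError(f"Speech API request failed: {err}") from err


if __name__ == "__main__":
    for label, installed in check_prerequisites().items():
        print(f"{label}: {'installed' if installed else 'missing'}")
```

Running the script prints one line per package, which makes it easy to see what still needs to be installed before moving on to the Windows or Linux setup.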
The acoustic model estimates syllable probabilities from the speech signal. In voice recognition, the acoustic model generally uses a hidden Markov model (HMM), and its task is to predict which sound or phoneme is pronounced in each speech segment. The language model estimates the probability of word sequences. Language models are divided into statistical models and rule-based models. The N-gram is a basic, efficient, and extensively used statistical language model: it estimates the probability of each word from the words that precede it, and in this way captures the statistical regularities of the language.

There are several speech recognition toolkits and libraries that one can use to build speech recognition systems. Some of the well-known toolkits are CMU Sphinx, Kaldi, Julius, and HTK.
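To make the N-gram idea concrete, here is a minimal sketch of a bigram (N = 2) language model using maximum-likelihood counts. The toy corpus and the function names are made up for illustration, and no smoothing is applied, so unseen word pairs get probability zero. Real toolkits use much larger corpora and smoothing.

```python
from collections import Counter


def tokenize(sentence):
    """Lowercase, split on whitespace, and add sentence boundary markers."""
    return ["<s>"] + sentence.lower().split() + ["</s>"]


def train_bigram(sentences):
    """Count contexts (words that have a successor) and adjacent word pairs."""
    contexts, bigrams = Counter(), Counter()
    for sentence in sentences:
        tokens = tokenize(sentence)
        contexts.update(tokens[:-1])
        bigrams.update(zip(tokens, tokens[1:]))
    return contexts, bigrams


def bigram_prob(contexts, bigrams, prev, word):
    """Maximum-likelihood estimate P(word | prev) = count(prev, word) / count(prev)."""
    if contexts[prev] == 0:
        return 0.0
    return bigrams[(prev, word)] / contexts[prev]


def sentence_prob(contexts, bigrams, sentence):
    """Probability of a sentence as the product of its bigram probabilities."""
    tokens = tokenize(sentence)
    prob = 1.0
    for prev, word in zip(tokens, tokens[1:]):
        prob *= bigram_prob(contexts, bigrams, prev, word)
    return prob


# Hypothetical toy corpus, for illustration only.
corpus = ["recognize speech", "recognize speech today", "wreck a nice beach"]
contexts, bigrams = train_bigram(corpus)

print(bigram_prob(contexts, bigrams, "recognize", "speech"))
print(sentence_prob(contexts, bigrams, "recognize speech"))
print(sentence_prob(contexts, bigrams, "speech recognize"))
```

A word order that never occurs in the corpus, such as "speech recognize", scores zero, which is how a language model steers a recognizer away from implausible transcriptions.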

Acoustic feature extraction, the acoustic model, and the language model are all part of automatic speech recognition (ASR). The figure shows the block diagram of a typical ASR system.

In a typical speech recognition system, the first step is feature extraction from the input speech. Feature extraction applies various signal processing techniques to enhance the quality of the input signal and to transform the input audio from the time domain to the frequency domain. Speech recognition relies heavily on this extraction and identification of acoustic features, which includes information compression and signal deconvolution procedures.

Based on the features extracted, a set of acoustic observations X is generated given a sequence of words W. The speech recognizer then estimates “the most likely word sequence W* for given acoustic observations based on a set of parameters of the underlying model”.
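The estimate of W* is commonly written with Bayes' rule. The formulation below is the standard textbook one, given here as an aid and not taken from the original article. X denotes the acoustic observations and W a candidate word sequence, as in the text.

$$
W^{*} = \arg\max_{W} P(W \mid X) = \arg\max_{W} \frac{P(X \mid W)\, P(W)}{P(X)} = \arg\max_{W} P(X \mid W)\, P(W)
$$

Here P(X | W) is supplied by the acoustic model and P(W) by the language model. P(X) can be dropped because it does not depend on W.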

ASR systems are evaluated on their accuracy, typically expressed as a word error rate. Pronunciation, accent, pitch, amplitude, and background noise are all characteristics that might affect word error rates.
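Word error rate is usually defined as the number of word substitutions, deletions, and insertions needed to turn the recognizer's output into the reference transcript, divided by the number of words in the reference. The sketch below computes it with a word-level edit distance. The function name and the sample sentences are illustrative, and the definition is the common one, not something stated in the article.

```python
def word_error_rate(reference, hypothesis):
    """Return (substitutions + deletions + insertions) / words in the reference."""
    ref = reference.lower().split()
    hyp = hypothesis.lower().split()
    if not ref:
        raise ValueError("reference must contain at least one word")

    # prev[j] holds the edit distance between the reference words seen so far
    # and the first j hypothesis words.
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i]
        for j, hyp_word in enumerate(hyp, start=1):
            cost = 0 if ref_word == hyp_word else 1
            curr.append(min(
                prev[j] + 1,         # deletion: reference word missing from hypothesis
                curr[j - 1] + 1,     # insertion: extra word in hypothesis
                prev[j - 1] + cost,  # match or substitution
            ))
        prev = curr
    return prev[-1] / len(ref)


# One substitution ("sat" -> "sit") and one deletion ("the") over six reference words.
print(word_error_rate("the cat sat on the mat", "the cat sit on mat"))
```

Because insertions count as errors, the rate can exceed 1.0 when the hypothesis is much longer than the reference.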

Although it is sometimes confused with voice recognition, speech recognition focuses only on converting speech from a verbal to a written format, whereas voice recognition aims simply to identify the voice of a particular person. A typical ASR system converts spoken language into readable text using machine learning or artificial intelligence (AI) technologies.
